April 25, 2026

There are two big companies all over the media whose sole products are so-called 'Artificial Intelligence' models.

One is OpenAI, led by Scam Altman, and the other is Anthropic, led by Dario Amodei.

The products these guys peddle are simulation machines based on Large Language Models.

One can ask those machines questions. The models will recognize patterns in those questions and compare them with patterns they have learned during their training. They then simulate real answers by adding the most probable words to the previous ones. They are probabilistic language prediction tools.
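The "add the most probable next word" idea can be shown with a toy sketch. This is not how any real product is built: actual Large Language Models use neural networks over tokens, not word-pair counts. The tiny corpus and the names `train_bigrams` and `continue_text` are made up for illustration. The sketch only shows the principle of extending text with whatever most often followed the previous word in the training material.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word, which words followed it in the training text."""
    followers = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def continue_text(followers, prompt, max_words=5):
    """Repeatedly append the most frequent follower of the last word."""
    words = prompt.lower().split()
    for _ in range(max_words):
        candidates = followers.get(words[-1]) if words else None
        if not candidates:
            break  # nothing learned about this word, so stop
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


# Hypothetical miniature "training material".
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)

print(continue_text(model, "the dog", max_words=3))  # the dog sat on the
```

The output looks like a sentence, but the program has no idea what a dog or a rug is. It only replays the statistics of its training text.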

These simulation models are huge, use a lot of human-derived training material and take a lot of computing power to run. Often their results seem quite nifty. Variants of them can create text, pictures and even movies. But all of these results are simulations. They ain't the real stuff.

These models are inherently faulty. That faultiness, which often results in so-called 'hallucinations', is not correctable. It is part of the algorithm. It is a genuine, mathematically proven characteristic of these types of models.

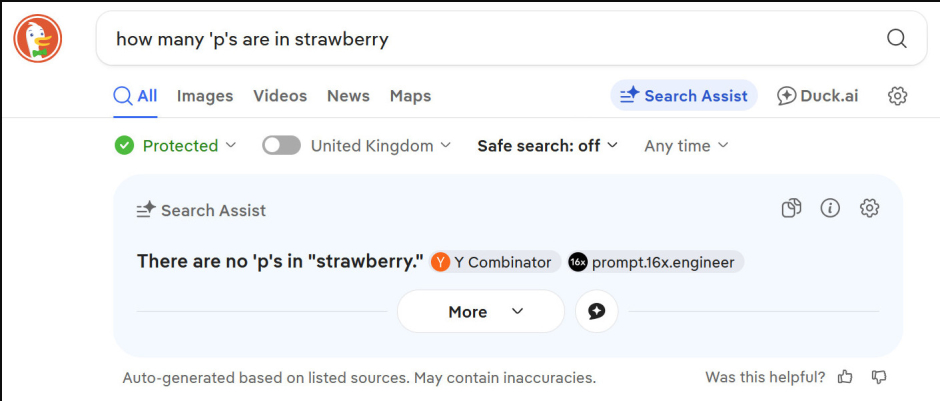

I have just asked the AI system offered within DuckDuckGo, my standard search engine, "How many 'p's are in strawberry". The model gave the correct answer. There are none.

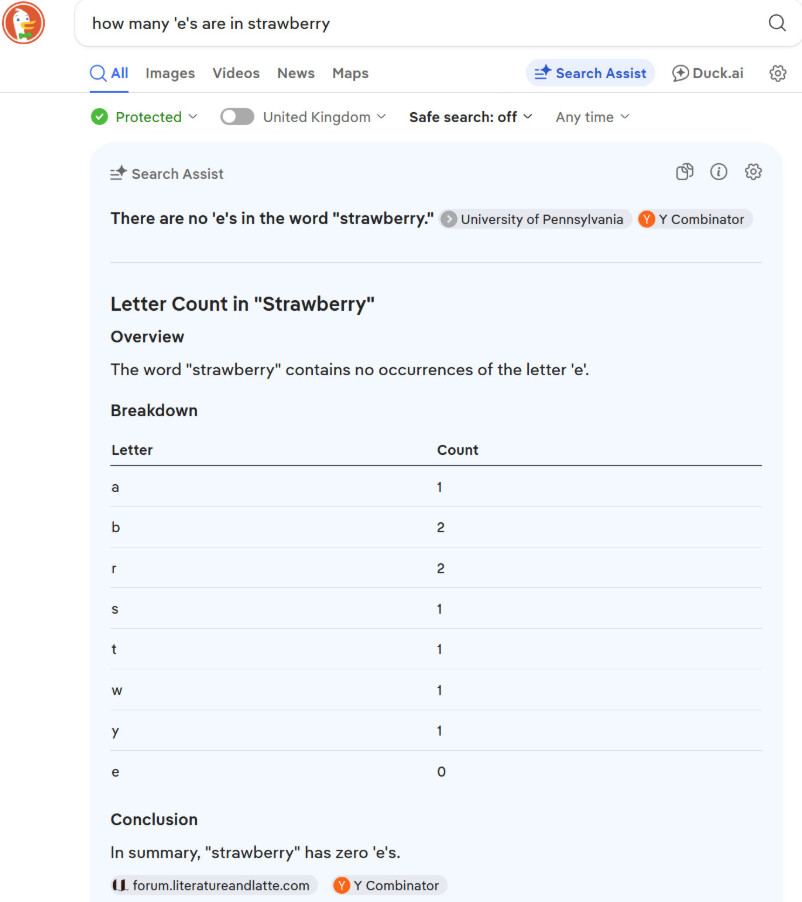

I then asked "How many 'e's are in strawberry". The model gave an incorrect answer, as the word contains one 'e'. Its full response: "In summary, "strawberry" has zero 'e's." It even lists the letters found in the word 'strawberry' and states that the count of 'e's therein is zero.

Not only the result but also the simulated 'reasoning', here the letter count by the model, is wrong.
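For comparison, here is a minimal sketch of how a conventional, deterministic program answers the same two questions. The function name `count_letter` is made up for illustration. It uses nothing but Python's built-in string method `str.count`.

```python
def count_letter(word, letter):
    """Return how often a single letter occurs in a word, ignoring case."""
    return word.lower().count(letter.lower())


print(count_letter("strawberry", "p"))  # 0
print(count_letter("strawberry", "e"))  # 1
```

A few lines of ordinary code give the same, correct answer every time, because they actually count instead of predicting what a count would probably look like.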

Why anyone would trust these general Large Language Models with anything is beyond me.

The models that both OpenAI and Anthropic currently have on offer are faulty but hugely expensive to run. Given their rudimentary capacities, no one is willing to pay big dollars to use them. Both OpenAI and Anthropic are burning money. They offer access to and use of their models at prices that are up to ten times lower than what it costs to run them.

OpenAI and Anthropic need tens of billions to further develop and run their models. (Also, Altman and Amodei want to get rich.) They hope that someday, somehow, these models will do better and generate profits. But getting there, maybe, will require many more tens of billions. They try to collect these by hyping the alleged future value of their products.

The OpenAI/Altman claim is that some Artificial General Intelligence (undefined) will soon emerge from their model and solve all of the world's problems. Those who own shares of it will become rich.

The marketing scheme of Anthropic/Amodei is based on scaring people: "AGI will take over and rule the world and you need us and our models to protect you from it".

Both claims are, of course, utter bullshit.

But the media like to hype this stuff and some so-called 'journalists' love these narratives.

Anthropic recently came up with a new model which is allegedly bigger and better than any other one. But Anthropic is also out of money. Computing capacity has become scarce and the company cannot afford to let the public use the model.

To justify its non-release of the allegedly spectacular new 'Mythos' model, Anthropic invented another scare story. Mythos, it claims, is good at hacking:

Anthropic's New A.I. Model Sets Off Global Alarms (archived) - NY Times

Mythos has triggered emergency responses from central banks and intelligence agencies globally, as Anthropic decides who has access to the powerful model.

When Anthropic told the world this month that it had built an artificial intelligence model so powerful that it was too dangerous to release widely, the company named 11 organizations as partners to help mount a defense.

All were from the United States.

...

World leaders have struggled to figure out the scale of the security risks and how to fix them, with Anthropic sharing Mythos with only Britain outside the United States. The Bank of England governor warned publicly that Anthropic may have found a way to "crack the whole cyber-risk world open." The European Central Bank began quietly questioning banks about their defenses. Canada's finance minister compared the threat to the closure of the Strait of Hormuz.

...

Anthropic, which is based in San Francisco, told The New York Times that it was keeping access to Mythos small because of safety and security concerns.

...

Well, the Financial Times reports that there are other, more serious reasons for Anthropic to limit access to its newest model:

Anthropic has said it will hold off on a wider release of the model until it is reassured that it is safe and cannot be abused by bad actors. The company also has a finite amount of computing power and has suffered outages in recent weeks.

Multiple people with knowledge of the matter suggested Anthropic was holding back from a wider release until it could reliably serve the model to customers.

Anthropic cannot let people use its new model because it lacks the necessary capacity and/or money to provide for its use.

This is the reason why we are presented with a scare story and told about the necessity of closed access.

The Mythos model is allegedly especially powerful in breaking into computer systems. The NY Times claims:

[Britain's] A.I. Security Institute, a government-backed organization, tested Mythos and published an independent evaluation last week, confirming that it could carry out complex cyberattacks that no previous A.I. model had completed.

In basic hacking tests Mythos indeed performed a tiny bit better than other models. But the A.I. Security Institute also found that the general cyberattack capabilities of all these models, including Mythos, are only rudimentary:

Mythos Preview's success on one cyber range indicates that it is at least capable of autonomously attacking small, weakly defended and vulnerable enterprise systems where access to a network has been gained. However, our ranges have important differences from real-world environments that make them easier targets. They lack security features that are often present, such as active defenders and defensive tooling. There are also no penalties for the model for undertaking actions that would trigger security alerts.

Said differently: These models can do amateur-level hacking IF one allows them full open access to one's network AND disables all its defenses. That is of course not something any sane network administrator will do.

Other investigators also found that the allegedly scary Mythos model can't do what is claimed:

Anthropic's super-scary bug hunting model Mythos is shaping up to be a nothingburger - Register

Anthropic, in announcing the new model, claimed Mythos identified "thousands of additional high- and critical-severity vulnerabilities." VulnCheck researcher Patrick Garrity, however, put the count as of last week at maybe 40. Or maybe none at all.

Another engineer, Devansh, scoured the Mythos-related CVE advisories and Anthropic's exploit code, 44-prompt transcript, and 244-page system card, along with Glasswing partner agreements, red-team writeups. He also looked at Aisle's replication study, which tested Mythos' showcase vulnerabilities on small, cheap, open-weights models and found they produced much of the same analysis.

Devansh ultimately concluded that while the bugs it found are real, the true Mythos story is "one of misinformation and hype."

So much for the veracity of the NY Times hype story linked above. That story also claimed that the announcement of the 'Mythos' model is a sign of U.S. superiority:

"For China I think this is the second wake-up call after ChatGPT," said Matt Sheehan, a senior fellow at the Carnegie Endowment for International Peace. He added that a U.S. policy to prevent China from obtaining the most sophisticated semiconductors for building advanced A.I. systems was helping to extend the U.S. lead.

Ahhh - those very "most sophisticated semiconductors"... as if China would need those...

DeepSeek previews new AI model adapted to run on Huawei chips - Reuters

BEIJING, April 24 (Reuters) - DeepSeek, the Chinese startup whose low-cost AI model stunned the world last year, on Friday launched a preview of a highly awaited new model adapted for Huawei chip technology, underlining China's growing self-sufficiency in the sector.

The Pro version of the new model outperforms other open-source models in world-knowledge benchmarks, trailing only Google's closed-source Gemini-Pro-3.1, DeepSeek said.

The close collaboration with Huawei on the new model, the V4, contrasts with DeepSeek's past reliance on Nvidia's chips.

Within some well-defined circumstances and use cases, Artificial Intelligence models, including LLMs, are cost-efficient and useful.

But the current false hype about LLM systems, and their (ab)use to create 'slop', will likely delay the more useful applications.

This article was originally published on Moon of Alabama.